So, last time I said vibe coding makes me stop thinking. Guess what? In certain cases, things are even worse.

There is no flow state

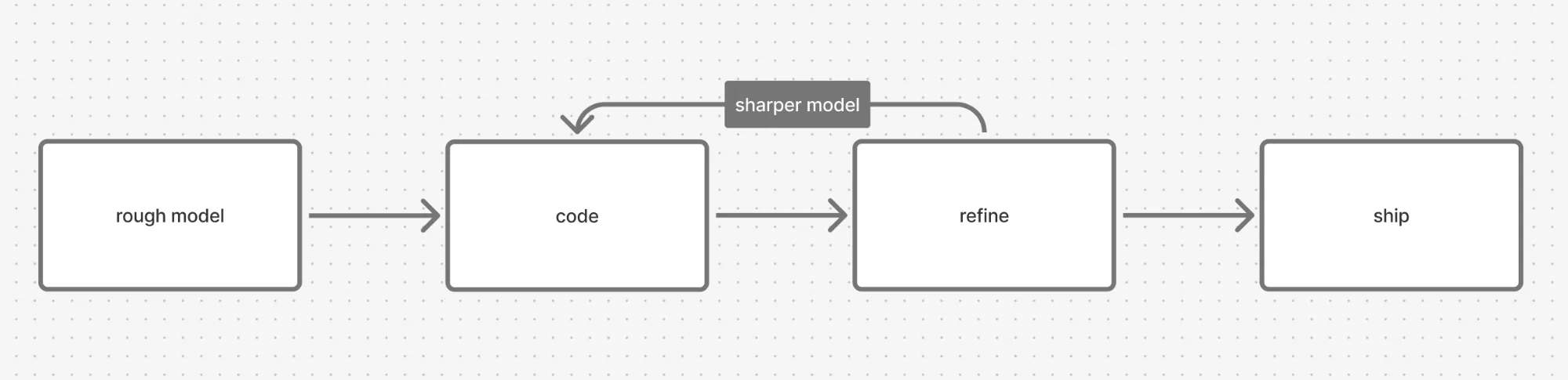

Normally, when I go "cave mode", a programming session looks like this:

One context, one head, uninterrupted flow.

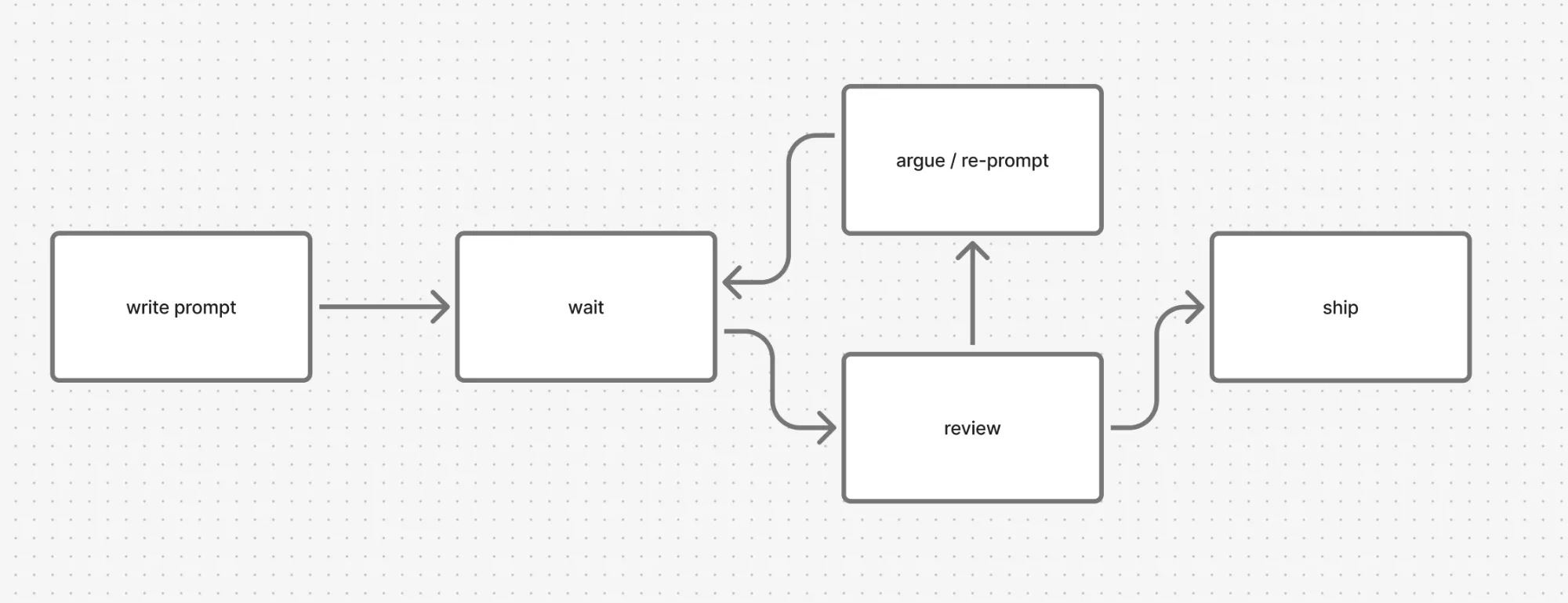

Now, with vibe coding, it looks like this:

In cave mode, building the mental model doesn't really stop at the rough mental model. I start with one, sure, and when I get stuck I go back to it. But I never leave the context. Every line I write tests an assumption, every edge case sharpens the mental model a bit more. Building the mental model and coding are the same thread.

Vibe coding cuts that thread. I'm not the one typing, so the only window where my mental model can grow is the plan. After that the agent goes off and writes in my name, and my understanding just stops while the codebase doesn't.

The plan can't see what matters

On small and contained work, AI really is faster, but on anything inside a big monolith, the speedup vanishes and the work just changes shape: the part where I build the mental model is gone, replaced by reviewing, arguing, and frankly, cursing the shit out of it. Devs know 🤷‍♂️.

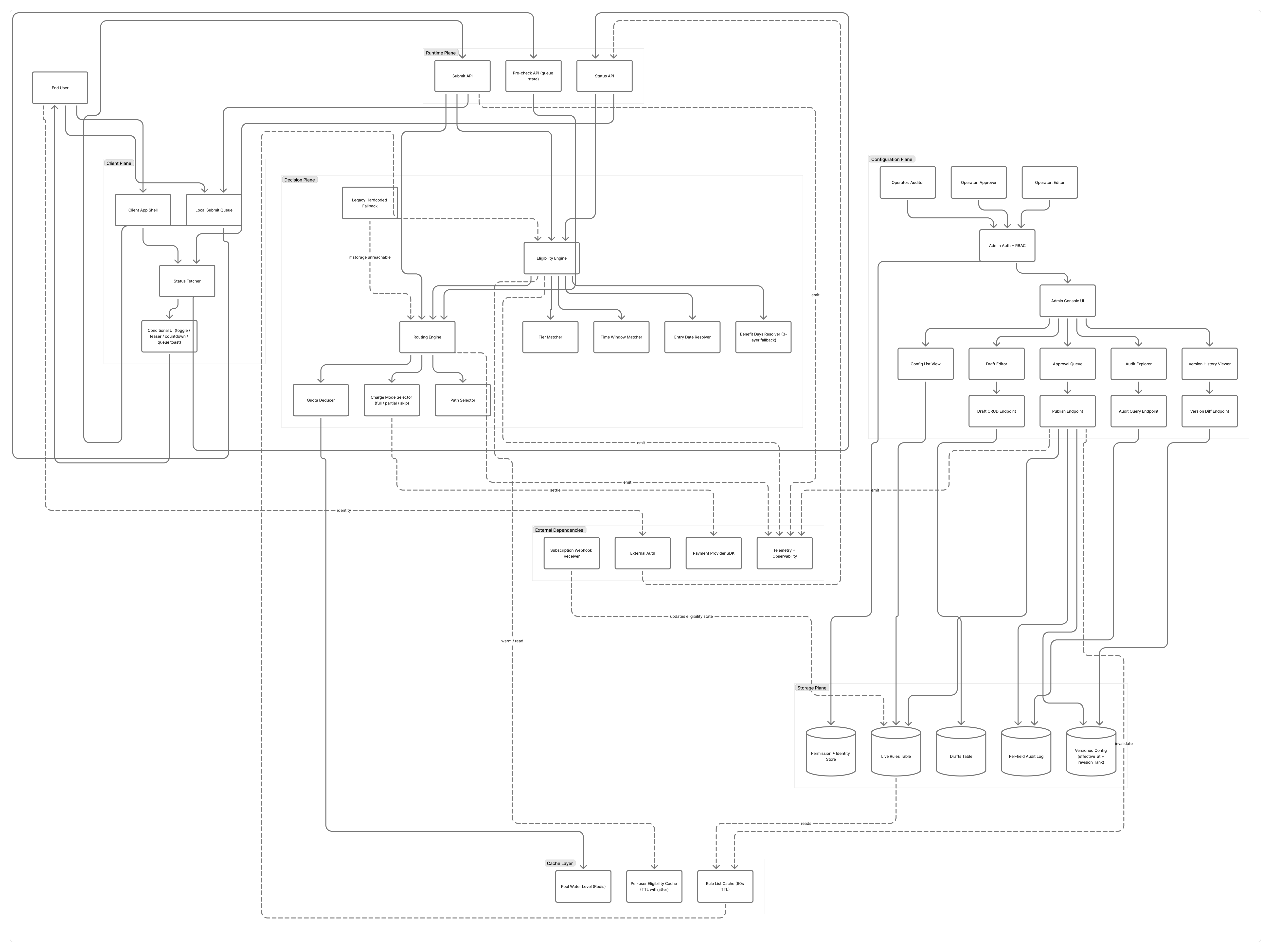

Like I said in pt.1, plan mode isn't upfront thinking. On the surface it looks like it is: the agent reads some files, gives me a plan, I approve, then I grab a coffee or something and let it cook. Chill as fuck, right? But that plan was built from whatever fit in the context window, and even today's LLM context windows are still severely limited. They can't hold a whole codebase, not to mention that some coding agents tend to load even less. So the plan can't see the invariants in my head, the reasoning buried in commit messages, the constraints discussed in Lark threads months ago. Once I approve, a wrong premise can quietly become the foundation.

And review won't save you

```
 src/api/handlers/orders.ts        | 892 +++++++++++++++++++++++-----------
 src/services/inventory.ts         | 421 ++++++++++++++++++++--------------
 src/db/migrations/0042_orders.sql | 287 ++++++++++++++++++++
 src/components/Dashboard.tsx      | 248 +++++++++++++++--------
 ...
 138 files changed, 4577 insertions(+), 3212 deletions(-)
```

That's what every later argue cycle is trying to correct, and the cost is higher than it looks, because I can't build the mental model at the same time. Prompt-writing and problem-modeling fight for the same brain space. Writing a prompt pulls what's in my head out into words, while building a mental model pulls the problem in. I can't do both at once, and every prompt-wait-output cycle wipes out a bit of the mental model I was holding.

And even if the mental model is somewhat clear, on a large codebase or a huge monolith I probably won't review the code line by line. In most cases I won't even look at a single line, yet I still hope everything works as planned. Then I hand it to the test team, and there goes another nightmare.

AI is a junior, and stays a junior

It clicked when I started thinking of AI as a junior. Except this junior writes way more lines than any human, never asks when in doubt, makes things up confidently, doesn't remember what I taught yesterday, and doesn't reflect on mistakes.

Teaching a junior is tiring, but at least it goes somewhere. They grow up, and after a year they're part of the system rather than a load on it. Teaching AI goes nowhere: every session starts cold, every fix gets re-broken next week, and the review load that would normally spread across ten juniors all lands on one senior.

The team ceiling becomes the AI ceiling

If the senior is the only one still holding the mental model, and the senior gets ground down by argue-loops, the team's correction capacity drops to whatever the AI itself can do.

Which is: surface-level pattern matching on whatever happens to be in the context window.

AI doesn't see invariants. It can't see why a try/catch was added after an incident like 2 years ago, or why a field is non-nullable because a downstream pipeline crashes if it isn't, or why two endpoints exist for what looks like the same data because each one serves a different client.
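Here's a made-up sketch of what those three cases can look like to someone reading only the code. None of it is from a real codebase: `Order`, `warehouseId`, `notifyInventory`, `placeOrder` and the two client functions are all hypothetical names, and the "reasons" in the comments are exactly the part that normally isn't written down anywhere.

```typescript
// Hypothetical sketch: every name and scenario below is invented for illustration.

interface Order {
  id: string;
  // Looks like it could be optional. In this made-up scenario it can't be:
  // a downstream pipeline crashes when the value is missing.
  warehouseId: string;
  total: number;
}

// Stand-in for a flaky dependency that sometimes throws.
function notifyInventory(order: Order): void {
  if (order.total < 0) {
    throw new Error("inventory service rejected order");
  }
}

// The try/catch looks like noise that is safe to "clean up". In this scenario
// it was added after an incident: a failed notification must never fail the
// order itself.
function placeOrder(order: Order): { ok: boolean; notified: boolean } {
  let notified = true;
  try {
    notifyInventory(order);
  } catch {
    notified = false;
  }
  return { ok: true, notified };
}

// Two functions that return what looks like the same data. They both exist
// because two different clients depend on two different shapes.
function getOrderForClientA(order: Order): { id: string; total: number } {
  return { id: order.id, total: order.total };
}

function getOrderForClientB(order: Order): { orderId: string; totalCents: number } {
  return { orderId: order.id, totalCents: Math.round(order.total * 100) };
}
```

Strip the comments and nothing in the code says why any of this is there. A plan built only from what's in the context window sees a redundant try/catch, a field that could be optional, and two functions to merge.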

These invariants live in heads, in Lark, in commit messages, in the people who left last quarter. AI plans look reasonable and miss them anyway. Then the plan gets approved, shipped, and rewritten three months later by a different agent that also doesn't see them.

I've watched modules go through three "definitive" rewrites in a year for this exact reason. Every rewrite was locally correct, every rewrite was globally wrong, and none of them talked to each other.

We're trapped

The cost of vibe coding isn't the bad code it produces; bad code is recoverable. The cost is what happens to me while supervising it.

Reflection time gets eaten, mental models stop forming, and six months in, the senior is busier and producing shallower work, while the team's actual ceiling is silently dropping toward the coding agent's.

I know there's a balance to strike with this stuff, and I just need time to find it.
